Why I'm Building a Database Engine in C#

When I tell people I’m building an ACID database engine in C#, the first reaction is always the same: “But what about GC pauses?”

It’s a fair question. Almost nobody builds high-performance database engines in .NET. The assumption is that you need C, C++, or Rust for this class of software, and that managed languages are fundamentally disqualified from the microsecond-latency club.

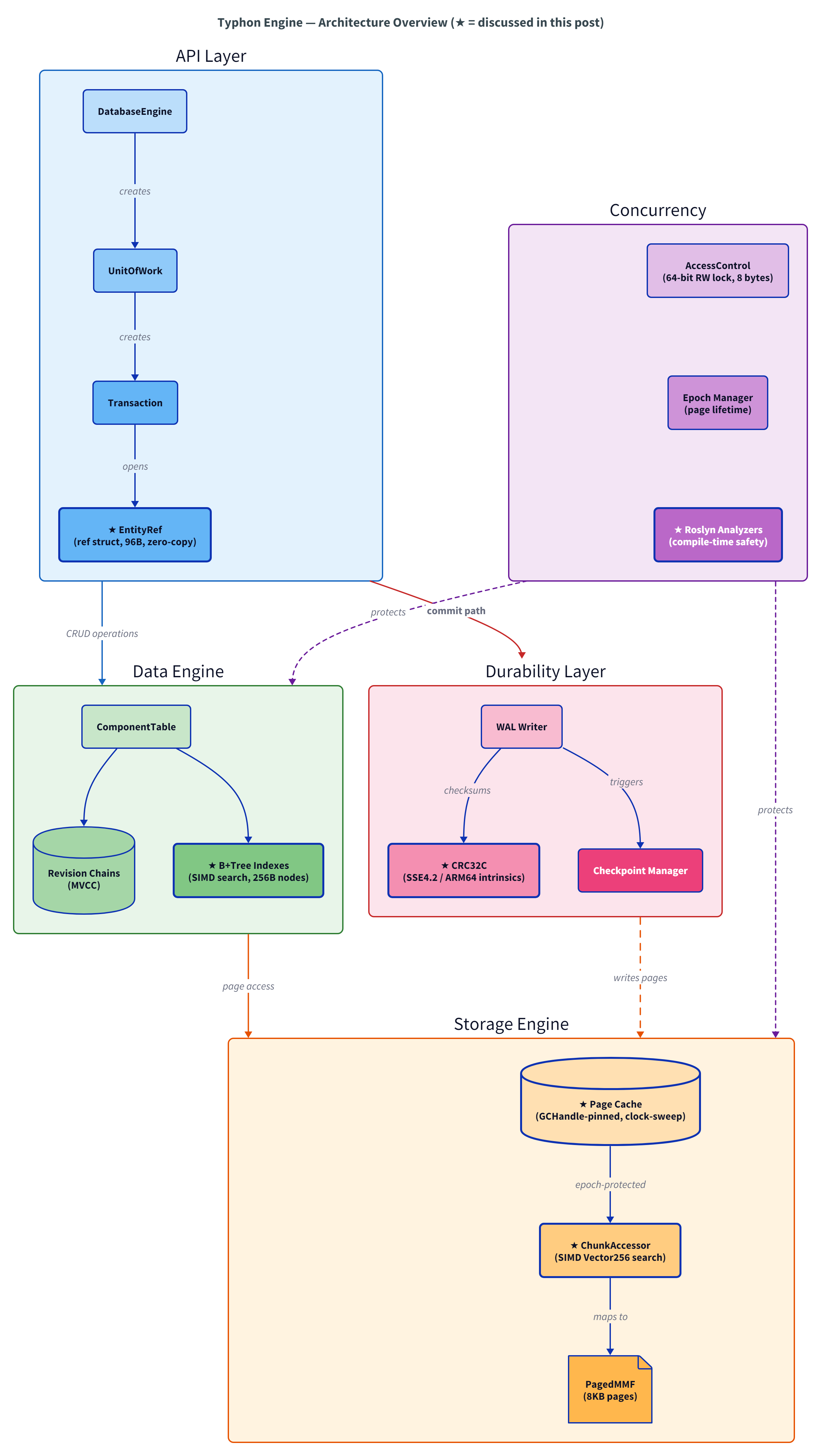

After 30 years of building real-time 3D engines and systems software, I chose C# anyway. The project is called Typhon: an embedded ACID database engine targeting 1–2 microsecond transaction commits. And the reasons behind that choice might change how you think about what C# can do.

The Case Against C# (Let’s Steel-Man It)

Before I make my case, let me honestly lay out every argument against choosing C# for this. These are real concerns, not strawmen.

The GC is non-deterministic. It can pause all your threads whenever it wants. For a database engine that promises microsecond latency, a 10ms Gen2 collection is catastrophic — that’s 10,000x your latency budget.

You don’t control memory layout. The managed heap decides where objects live. The GC can move them around during compaction. You can’t guarantee that your B+Tree nodes sit on cache-line boundaries, or that your page cache buffer won’t get relocated mid-transaction.

JIT warmup is real. The first call to any method pays the compilation cost. In a database engine, the first transaction after startup shouldn’t be 100x slower than the steady state.

Virtual dispatch and bounds checking add overhead. Every array access has a hidden bounds check. Every interface call goes through a vtable. In a hot loop processing millions of entities, these nanoseconds compound.

These are all legitimate problems. I won’t pretend they aren’t. But here’s what most people miss: modern C# has answers for every single one of them.

What Most People Don’t Know About C#

The C# that most developers know — classes, garbage collection, LINQ — is only half the language. There’s a whole other side that the .NET runtime team has been quietly building for a decade, and it looks nothing like what you’d expect.

unsafe gives you C-level control. Raw pointers, pointer arithmetic, stackalloc for stack buffers, fixed-size buffers: the JIT generates the same mov/cmp/jne instructions you’d get from C. Not “close to C.” The same instructions.

GCHandle.Alloc(array, GCHandleType.Pinned) makes the GC irrelevant where it matters. You can pin byte arrays so the GC never moves them. Typhon’s entire page cache is pinned memory: the GC doesn’t move it, and because a byte array holds no object references, there is nothing inside it to scan. It’s just raw bytes at a fixed address, much like a malloc’d block in C.
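
As a minimal sketch of the pinning idea (not Typhon’s actual page cache; the 8 KB size and the marker value are illustrative assumptions), the following pins a byte array, writes through its fixed address, and reads the value back through the array. On .NET 5 and later, GC.AllocateArray with pinned: true is an alternative that needs no handle.

```csharp
using System;
using System.Runtime.InteropServices;

// Pin an 8 KB buffer so the GC can never relocate it (size is illustrative).
byte[] page = new byte[8192];
GCHandle handle = GCHandle.Alloc(page, GCHandleType.Pinned);
try
{
    // The address stays valid until handle.Free() is called.
    IntPtr address = handle.AddrOfPinnedObject();

    // Write through the raw address, then read back through the array.
    Marshal.WriteInt32(address, 0, 0x54595048);
    Console.WriteLine(BitConverter.ToInt32(page, 0) == 0x54595048); // True
}
finally
{
    handle.Free(); // unpin; a forgotten handle keeps the array pinned forever
}

// Alternative on .NET 5+: allocate directly on the pinned object heap.
byte[] pinnedPage = GC.AllocateArray<byte>(8192, pinned: true);
Console.WriteLine(pinnedPage.Length); // 8192
```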

ref struct eliminates heap allocations on hot paths. A ref struct can never escape to the heap. It lives on the stack, dies when the scope ends, and the GC never knows it existed. Typhon’s entity accessor (EntityRef) is a 96-byte ref struct — zero allocation, zero GC pressure.
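
To make the idea concrete, here is a small sketch of a stack-only reader. RecordReader is a hypothetical type invented for illustration, far simpler than EntityRef; it only shows that a ref struct can wrap a span over a stack buffer without any heap allocation.

```csharp
using System;
using System.Buffers.Binary;

// Stack buffer standing in for a page fragment; no heap allocation involved.
Span<byte> buffer = stackalloc byte[8];
BinaryPrimitives.WriteInt32LittleEndian(buffer, 42);
BinaryPrimitives.WriteInt32LittleEndian(buffer.Slice(4), 7);

var reader = new RecordReader(buffer);
Console.WriteLine(reader.ReadInt32()); // 42
Console.WriteLine(reader.ReadInt32()); // 7

// A ref struct may hold a span field. The compiler rejects boxing it,
// storing it in a class field, or capturing it in a lambda or async method.
ref struct RecordReader
{
    private readonly ReadOnlySpan<byte> _data;
    private int _offset;

    public RecordReader(ReadOnlySpan<byte> data)
    {
        _data = data;
        _offset = 0;
    }

    // Reads the next little-endian int and advances the cursor.
    public int ReadInt32()
    {
        int value = BinaryPrimitives.ReadInt32LittleEndian(_data.Slice(_offset));
        _offset += sizeof(int);
        return value;
    }
}
```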

Constrained generics give you true monomorphization. When you write where T : unmanaged, T is guaranteed to be a value type, and the JIT generates a separate native code path for each value-type argument. sizeof(T) becomes a constant. Dead branches get eliminated. It’s the same optimization Rust gets from generics: specialization instead of runtime dispatch, performed at JIT time rather than ahead of time.
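
A small sketch of what that looks like in practice. ReadAt is a hypothetical helper, not part of Typhon; it treats a byte buffer as an array of T-sized slots and relies on the element size being a per-instantiation constant.

```csharp
using System;
using System.Runtime.CompilerServices;
using System.Runtime.InteropServices;

byte[] page = new byte[32];
BitConverter.TryWriteBytes(page.AsSpan(8), 123456789L); // slot 1 when read as long
BitConverter.TryWriteBytes(page.AsSpan(20), 99);        // slot 5 when read as int

Console.WriteLine(ReadAt<long>(page, 1)); // 123456789
Console.WriteLine(ReadAt<int>(page, 5));  // 99

// For each value-type T the JIT compiles a separate body, so Unsafe.SizeOf<T>()
// folds to a constant (8 for long, 4 for int) instead of being looked up at run time.
static T ReadAt<T>(ReadOnlySpan<byte> page, int index) where T : unmanaged
{
    int stride = Unsafe.SizeOf<T>();
    return MemoryMarshal.Read<T>(page.Slice(index * stride, stride));
}
```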

Hardware intrinsics are first-class. System.Runtime.Intrinsics gives you Vector256 and Sse42.Crc32, and System.Numerics adds BitOperations.TrailingZeroCount. These map to the same SIMD and bit-manipulation instructions available in C/C++, with the same performance, plus runtime feature detection so you can fall back gracefully.

[StructLayout(LayoutKind.Explicit)] gives you exact memory layout. You control every byte: field offsets, padding, total size. Cache-line padding, false-sharing prevention, and bit-packing are all there.
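
Two illustrative uses of explicit layout, sketched with hypothetical types (PaddedCounter and PackedId are not Typhon types): padding a counter out to a full 64-byte cache line, and overlapping fields like a C union.

```csharp
using System;
using System.Runtime.CompilerServices;
using System.Runtime.InteropServices;

Console.WriteLine(Unsafe.SizeOf<PaddedCounter>()); // 64

var id = new PackedId { Raw = 0x0000_0002_0000_0001UL };
if (BitConverter.IsLittleEndian)
    Console.WriteLine($"{id.Low} {id.High}"); // 1 2

// Each counter occupies 64 bytes, so the Value fields of adjacent array
// elements are 64 bytes apart and never land on the same cache line.
[StructLayout(LayoutKind.Explicit, Size = 64)]
struct PaddedCounter
{
    [FieldOffset(0)] public long Value;
}

// Overlapping fields: a C-style union that views the same 8 bytes two ways.
[StructLayout(LayoutKind.Explicit)]
struct PackedId
{
    [FieldOffset(0)] public ulong Raw;
    [FieldOffset(0)] public uint Low;
    [FieldOffset(4)] public uint High;
}
```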

This isn’t “C# trying to be C.” It’s C# providing a genuine systems programming layer on top of a best-in-class managed ecosystem.

What Typhon Actually Looks Like

Theory is nice. Now let’s look at real code.

Hardware-accelerated WAL checksums

Every page written to the Write-Ahead Log needs a CRC32C checksum. Here’s what that looks like in C# — calling CPU instructions by name:

    // Dispatch on CPU features. The JIT treats each IsSupported check as a
    // constant and drops the branches that cannot be taken on this machine.
    private static uint ComputePartial(uint crc, ReadOnlySpan<byte> data)
    {
        if (Sse42.X64.IsSupported) return ComputeSse42X64(crc, data);
        if (Sse42.IsSupported) return ComputeSse42X32(crc, data);
        if (ArmCrc32.Arm64.IsSupported) return ComputeArm64(crc, data);
        return ComputeSoftware(crc, data);
    }

    private static uint ComputeSse42X64(uint crc, ReadOnlySpan<byte> data)
    {
        ulong crc64 = crc;
        ref byte ptr = ref MemoryMarshal.GetReference(data);
        int offset = 0;
        int aligned = data.Length & ~7; // largest multiple of 8 that fits

        // Main loop: fold 8 bytes per crc32 instruction.
        while (offset < aligned)
        {
            crc64 = Sse42.X64.Crc32(crc64, Unsafe.ReadUnaligned<ulong>(ref Unsafe.Add(ref ptr, offset)));
            offset += 8;
        }

        // Tail: fold the remaining 0 to 7 bytes one at a time.
        uint crc32 = (uint)crc64;
        while (offset < data.Length)
        {
            crc32 = Sse42.Crc32(crc32, Unsafe.Add(ref ptr, offset));
            offset++;
        }

        return crc32;
    }

Sse42.X64.Crc32() compiles to a single x86 crc32 instruction. The runtime detects the CPU capabilities, the JIT eliminates the dead branches, and what executes is the same code a C programmer would write — but with automatic fallback on platforms without SSE4.2. Result: ~1.3 µs per 8 KB page.
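
The listing calls ComputeSoftware without showing it. As a sketch of what such a fallback could look like (an assumption, not Typhon’s implementation), here is a bit-at-a-time CRC32C update using the reflected Castagnoli polynomial 0x82F63B78. The names Crc32C, Update, and UpdateSoftware are hypothetical, and a production fallback would more likely use lookup tables. Like the crc32 instruction, the update step applies no initial or final inversion; the wrapper adds both.

```csharp
using System;
using System.Runtime.Intrinsics.X86;
using System.Text;

byte[] check = Encoding.ASCII.GetBytes("123456789");
Console.WriteLine($"{Crc32C(check):X8}"); // E3069283, the standard CRC-32C check value

// Full CRC32C: invert on the way in and on the way out.
static uint Crc32C(ReadOnlySpan<byte> data) => ~Update(0xFFFFFFFFu, data);

// Use the hardware instruction when the CPU has SSE4.2, else fall back to software.
static uint Update(uint crc, ReadOnlySpan<byte> data)
{
    if (!Sse42.IsSupported) return UpdateSoftware(crc, data);
    foreach (byte b in data) crc = Sse42.Crc32(crc, b);
    return crc;
}

// Bit-at-a-time update with the reflected Castagnoli polynomial.
static uint UpdateSoftware(uint crc, ReadOnlySpan<byte> data)
{
    foreach (byte b in data)
    {
        crc ^= b;
        for (int bit = 0; bit < 8; bit++)
            crc = (crc & 1) != 0 ? (crc >> 1) ^ 0x82F63B78u : crc >> 1;
    }
    return crc;
}
```

Both paths produce the same value for the same input, which is what makes the silent fallback safe: a WAL written on one machine can be verified on another regardless of which branch ran.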

The SIMD chunk accessor

This is Typhon’s page cache hot path: a 16-slot cache that finds your data in one of three tiers. The excerpt shows the first two, the MRU check and the SIMD search:

    // === ULTRA FAST PATH: MRU check ===
    var mru = _mruSlot;
    if (_pageIndices[mru] == pageIndex)
    {
        var headerOffset = pageIndex == 0 ? _rootHeaderOffset : _otherHeaderOffset;
        return (byte*)_baseAddresses[mru] + headerOffset + offset * _stride;
    }

    // === FAST PATH: SIMD search through all 16 cached slots ===
    fixed (int* indices = _pageIndices)
    {
        var target = Vector256.Create(pageIndex);

        var v0 = Vector256.Load(indices);
        var mask0 = Vector256.Equals(v0, target).ExtractMostSignificantBits();
        if (mask0 != 0)
        {
            var slot = BitOperations.TrailingZeroCount(mask0);
            return GetFromSlot(slot, pageIndex, offset, dirty);
        }

        var v1 = Vector256.Load(indices + 8);
        var mask1 = Vector256.Equals(v1, target).ExtractMostSignificantBits();
        if (mask1 != 0)
        {
            var slot = 8 + BitOperations.TrailingZeroCount(mask1);
            return GetFromSlot(slot, pageIndex, offset, dirty);
        }
    }

The _pageIndices array is a fixed int[16] — 64 bytes, one cache line, packed for SIMD. One Vector256.Equals compares 8 page indices in a single instruction. The MRU fast path handles the common case (repeated access to the same page) with a single branch — branch predictor friendly, near-zero cost.
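
The search step can be tried in isolation with safe code. The sketch below is a simplified stand-in, not Typhon’s accessor: FindSlot is a hypothetical helper that takes the 16 indices as a span, uses Vector256.Create over a span (available from .NET 7) instead of a pinned pointer, and falls back to a scalar search when 256-bit SIMD is unavailable.

```csharp
using System;
using System.Numerics;
using System.Runtime.Intrinsics;

int[] pageIndices = { 40, 7, 19, 3, 88, 51, 12, 64, 5, 23, 91, 2, 77, 30, 16, 9 };
Console.WriteLine(FindSlot(pageIndices, 91));   // 10
Console.WriteLine(FindSlot(pageIndices, 1000)); // -1

// Returns the slot holding pageIndex, or -1 on a miss. Expects exactly 16 entries.
static int FindSlot(ReadOnlySpan<int> indices, int pageIndex)
{
    if (indices.Length != 16) throw new ArgumentException("expected 16 slots");

    if (Vector256.IsHardwareAccelerated)
    {
        Vector256<int> target = Vector256.Create(pageIndex);
        for (int start = 0; start < 16; start += 8)
        {
            // Compare 8 indices at once; each matching lane sets one bit in the mask.
            Vector256<int> lanes = Vector256.Create<int>(indices.Slice(start, 8));
            uint mask = Vector256.Equals(lanes, target).ExtractMostSignificantBits();
            if (mask != 0) return start + BitOperations.TrailingZeroCount(mask);
        }
        return -1;
    }

    // Scalar fallback for hardware without 256-bit SIMD.
    return indices.IndexOf(pageIndex);
}
```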

Zero-copy entity reads

EntityRef is a ref struct — stack-only, 96 bytes, with an inline fixed array caching component locations:

    public unsafe ref struct EntityRef
    {
        internal readonly EntityId _id;
        internal readonly ArchetypeMetadata _archetype;
        internal readonly ArchetypeEngineState _engineState;
        internal readonly Transaction _tx;
        internal ushort _enabledBits;
        internal readonly bool _writable;
        private fixed int _locations[16]; // inline component chunk IDs

        [MethodImpl(MethodImplOptions.AggressiveInlining)]
        public ref readonly T Read<T>(Comp<T> comp) where T : unmanaged
        {
            byte slot = _archetype.GetSlot(comp._componentTypeId);
            int chunkId = _locations[slot];
            var table = _engineState.SlotToComponentTable[slot];
            return ref _tx.ReadEcsComponentData<T>(table, chunkId);
        }
    }

That Read<T> call goes from method call → slot lookup → chunk ID → page cache → pointer arithmetic → ref readonly T pointing directly into a pinned memory page. Zero copies. Zero allocations. Zero GC involvement. The where T : unmanaged constraint means the JIT knows the exact layout — it compiles to pointer arithmetic, nothing more.
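
The “zero copies” part is easy to demonstrate on its own. In this sketch, ReadComponent and Position are hypothetical stand-ins (Typhon’s real path goes through the transaction and page cache); the point is only that a ref readonly return aliases the bytes in the page instead of copying them.

```csharp
using System;
using System.Runtime.InteropServices;

// A pinned buffer standing in for a page; Position is a hypothetical component.
byte[] page = GC.AllocateArray<byte>(64, pinned: true);
MemoryMarshal.Cast<byte, Position>(page.AsSpan())[2] = new Position { X = 3, Y = 4 };

ReadOnlySpan<byte> view = page;
ref readonly Position p = ref ReadComponent<Position>(view, 2);
Console.WriteLine($"{p.X} {p.Y}"); // 3 4

// Mutate the page after taking the reference: p sees the change,
// because it points into the page rather than at a copy.
MemoryMarshal.Cast<byte, Position>(page.AsSpan())[2].X = 10;
Console.WriteLine(p.X); // 10

// Reinterprets the page as T-sized slots and returns a read-only reference to one.
static ref readonly T ReadComponent<T>(ReadOnlySpan<byte> page, int index) where T : unmanaged
    => ref MemoryMarshal.Cast<byte, T>(page)[index];

struct Position
{
    public int X;
    public int Y;
}
```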

JIT-specialized hash functions

Even the hash functions exploit the JIT. Since sizeof(TKey) is a known constant by the time the JIT compiles each value-type instantiation, the dead branches vanish:

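    // sizeof(TKey) and the pointer cast require an unsafe context
    // (on this method or on the enclosing type).
    [MethodImpl(MethodImplOptions.AggressiveInlining)]
    internal static uint ComputeHash<TKey>(TKey key) where TKey : unmanaged
    {
        // 4-byte keys such as int, uint, float
        if (sizeof(TKey) == 4) return FastHash32(Unsafe.As<TKey, uint>(ref key));
        // 8-byte keys such as long, double
        if (sizeof(TKey) == 8) return XxHash32_8Bytes(Unsafe.As<TKey, long>(ref key));
        // any other key size
        return XxHash32_Bytes((byte*)Unsafe.AsPointer(ref key), sizeof(TKey));
    }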

When you call ComputeHash<int>(42), the JIT generates just the 4-byte path. The other two branches are completely eliminated. This is real monomorphization, not runtime dispatch.

The Productivity Argument

A database engine is more than its hot path. Around the core engine sits a large shell of infrastructure: configuration management, structured logging, telemetry, dependency injection, testing, benchmarking.

In C or Rust, you’d build much of this yourself or stitch together crates/libraries with varying quality. In .NET, this is production-grade and free: ILogger and OpenTelemetry for observability, BenchmarkDotNet for rigorous micro-benchmarks, NUnit for testing, IConfiguration for settings. All well-documented, all interoperable, all maintained by Microsoft or battle-tested OSS communities.

For a solo developer building a database engine, this is a genuine competitive advantage. I spend my time on concurrency primitives and page cache eviction, not on reinventing a logging framework.

It’s the Memory Layout, Not the Language

Here’s the insight that years of real-time 3D engines taught me: the bottleneck in a database engine is memory access patterns, not instruction throughput.

A cache miss to DRAM on a Ryzen 9 7950X costs 61–73 nanoseconds. At the chip’s 4.5 GHz base clock, that’s roughly 300 CPU cycles doing nothing, waiting for data. A CAS operation hitting L1 costs 1.4 nanoseconds. The ratio is roughly 50:1.

No amount of “zero-cost abstractions” in your language can save you if your data structures cause cache misses. Conversely, if your data layout is cache-friendly — contiguous, aligned, predictable access patterns — the language barely matters. C# with unsafe generates identical machine code to C on hot paths. The JIT is that good.

What matters is:

- Cache-line awareness: Typhon’s B+Tree nodes are 128 bytes — two cache lines. The stride prefetcher on Zen4 covers the second line automatically. This alone cut insert latency by 53% and lookup latency by 30% versus 64-byte nodes.

- Data-oriented design: Structure of Arrays over Array of Structures. SIMD-friendly layouts. Blittable types only.

- Minimizing indirections: Every pointer chase is a potential cache miss. The SIMD chunk accessor’s MRU hit avoids the chase entirely.

The language you write in matters far less than the memory layout you design.
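
To illustrate the Structure of Arrays point, here is a small sketch with a hypothetical Row type. Both loops compute the same sum, but the first pulls every field of every row through the cache while the second touches only the column it needs.

```csharp
using System;

const int Count = 1_000;

// Array of Structures: summing Price drags Id, Quantity, and Flags along,
// so only 4 of every 16 bytes fetched are useful.
var rows = new Row[Count];
for (int i = 0; i < Count; i++) rows[i].Price = i;

// Structure of Arrays: the Price column is contiguous, so every 64-byte
// cache line fetched holds 16 useful values.
var prices = new int[Count];
for (int i = 0; i < Count; i++) prices[i] = i;

long sumAos = 0;
foreach (ref readonly Row row in rows.AsSpan()) sumAos += row.Price;

long sumSoa = 0;
foreach (int price in prices) sumSoa += price;

Console.WriteLine(sumAos == sumSoa); // True: same result, different memory traffic

struct Row
{
    public int Id;
    public int Price;
    public int Quantity;
    public int Flags;
}
```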

The Numbers

All measurements on a Ryzen 9 7950X, .NET 10.0, BenchmarkDotNet, release configuration.

| Operation | Latency | Throughput |
|---|---|---|
| CRUD lifecycle MVCC (spawn, read, update, destroy, commit) | 1.2 µs | 830K ops/sec |
| 90 reads/10 updates workload (100 ops per tx, MVCC) | 22 µs | ~4.5M entity-ops/sec |
| B+Tree lookup (hit) | 267 ns | 3.7M ops/sec |
| B+Tree sequential scan (per key) | 2.1 ns | 479M keys/sec |
| Uncontended lock acquire | 7.8 ns | 128M ops/sec |
| Page cache hit | 5.3 ns | — |

Context: an uncontended CAS on Zen4 costs 1.4 ns. A DRAM round-trip costs 61–73 ns. Typhon’s lock acquire (7.8 ns) costs about as much as 5–6 CAS operations, which is tight considering it handles shared/exclusive arbitration with waiter tracking. The 267 ns B+Tree lookup implies 6–7 memory accesses, which matches a tree traversal through L2/L3 cache.

These are early alpha numbers. There’s room to improve. But they validate the core thesis: C# is not the bottleneck.

Trade-offs

No choice is without cost. Here’s what I’d tell someone considering the same path.

Memory safety is on you. In unsafe blocks, you can corrupt memory, dereference bad pointers, and overflow buffers, and the compiler won’t save you. Where the cost is acceptable, Span<T> is a bounds-checked alternative: slightly slower, but memory-safe.

The GC hasn’t been a problem — but it could be. By pinning the page cache and using ref struct on hot paths, Gen2 collections are rare and cheap. But I won’t pretend this is guaranteed. A workload that allocates heavily in managed code between transactions could still see pauses. The answer is discipline: don’t allocate on hot paths. The language lets you — it just doesn’t force you.
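
One way to keep that discipline honest is to measure it. The sketch below is an assumption about how one might check a hot path, not something taken from Typhon: AllocatedBytes is a hypothetical helper built on GC.GetAllocatedBytesForCurrentThread, and Sink exists only so the heap allocation cannot be optimized away.

```csharp
using System;

// Heap path: the array escapes into a static field, so it must live on the managed heap.
long onHeap = AllocatedBytes(() => Sink.Last = new byte[256]);

// Stack path: the scratch buffer lives on the stack and is invisible to the GC.
long onStack = AllocatedBytes(() =>
{
    Span<byte> scratch = stackalloc byte[256];
    scratch[0] = 1;
});

Console.WriteLine($"heap path: {onHeap} bytes, stack path: {onStack} bytes");

// Managed bytes allocated by the current thread while running the action.
static long AllocatedBytes(Action action)
{
    long before = GC.GetAllocatedBytesForCurrentThread();
    action();
    return GC.GetAllocatedBytesForCurrentThread() - before;
}

static class Sink
{
    public static byte[] Last = Array.Empty<byte>();
}
```

The heap path reports at least the 256 bytes of the array plus its header; the stack path would normally be expected to report zero. A check like this can live in a unit test so that an accidental allocation on a hot path fails the build rather than showing up later as a pause.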

“But Rust would give you compile-time safety.” True: the borrow checker catches ownership and lifetime bugs that unsafe C# can’t. But C# has a trick of its own: Roslyn analyzers. I wrote a custom analyzer suite (TYPHON001–007) that enforces domain-specific safety rules as compiler errors:

- [NoCopy] attribute + analyzer: performance-critical structs like ChunkAccessor cannot be passed by value; the compiler reports an error if you forget ref. This is the same guarantee Rust’s borrow checker gives for move semantics, but scoped to the types that actually matter.

- Ownership tracking: if you create a ChunkAccessor or Transaction and don’t dispose it, that’s a compiler error, not a runtime leak. The analyzer tracks ownership transfers through assignments, returns, and ref/out parameters. A [return: TransfersOwnership] annotation on a method declares the transfer so the analyzer can act accordingly.

- Disposal completeness: if your type holds a critical disposable field and your Dispose() method misses it, or has an early return that skips it, that’s a compiler error.

    // This is a compile-time error in Typhon (TYPHON001)
    void Process(ChunkAccessor accessor) { ... }     // ✗ Error: must be passed by ref
    void Process(ref ChunkAccessor accessor) { ... } // ✓ OK

You don’t get Rust’s safety for free in C#. But you can build the exact subset you need as compiler errors, tailored to your domain. And unlike Rust’s borrow checker, these rules carry domain context in the diagnostics: “causes page cache deadlock” is more actionable than “value moved here.”

Rust’s ecosystem for the surrounding infrastructure (logging, DI, configuration, testing) is also less mature than .NET’s, and as a solo developer, my velocity matters. I chose the language where I ship faster.

JIT warmup is real but manageable. The first few transactions after cold start are slower. For an embedded engine (no separate server process), this is acceptable, because the host application typically has its own warmup. For a server database, you’d want to precompile with ReadyToRun or Native AOT, or tune tiered compilation.
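
For a host that wants to pay the JIT cost up front, one option is to ask the runtime to compile hot-path methods during startup. This is a sketch under assumptions, not Typhon’s approach: Warmup and HotPath are hypothetical names, and RuntimeHelpers.PrepareMethod only removes the first-call compilation cost, so tiered compilation may still re-optimize the methods later.

```csharp
using System;
using System.Reflection;
using System.Runtime.CompilerServices;

Console.WriteLine($"pre-compiled {Warmup.PrepareAll(typeof(HotPath))} methods");

static class Warmup
{
    // Asks the runtime to compile every non-generic method declared on the type
    // so that the first real call does not pay the JIT cost.
    public static int PrepareAll(Type type)
    {
        const BindingFlags All = BindingFlags.DeclaredOnly | BindingFlags.Public |
                                 BindingFlags.NonPublic | BindingFlags.Static |
                                 BindingFlags.Instance;
        int prepared = 0;
        foreach (MethodInfo method in type.GetMethods(All))
        {
            // Abstract methods have no body; open generics need concrete type arguments.
            if (method.IsAbstract || method.ContainsGenericParameters) continue;
            RuntimeHelpers.PrepareMethod(method.MethodHandle);
            prepared++;
        }
        return prepared;
    }
}

// Hypothetical stand-in for an engine's hot-path type.
static class HotPath
{
    public static int Commit(int value) => value + 1;
    public static int Lookup(int key) => key * 2;
}
```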

What’s Next

In the next post, I’ll explain why an ACID database engine borrows its storage architecture from game engines — specifically the Entity-Component-System pattern. Game engines and databases are solving the same fundamental problem: managing structured data with extreme performance constraints. They just evolved completely different solutions.

If you want to follow along, the best way is to star the repo or subscribe to the RSS feed.

This is Post #1 in a series about building a database engine in C#. Next up: “What Game Engines Know About Data That Databases Forgot”.