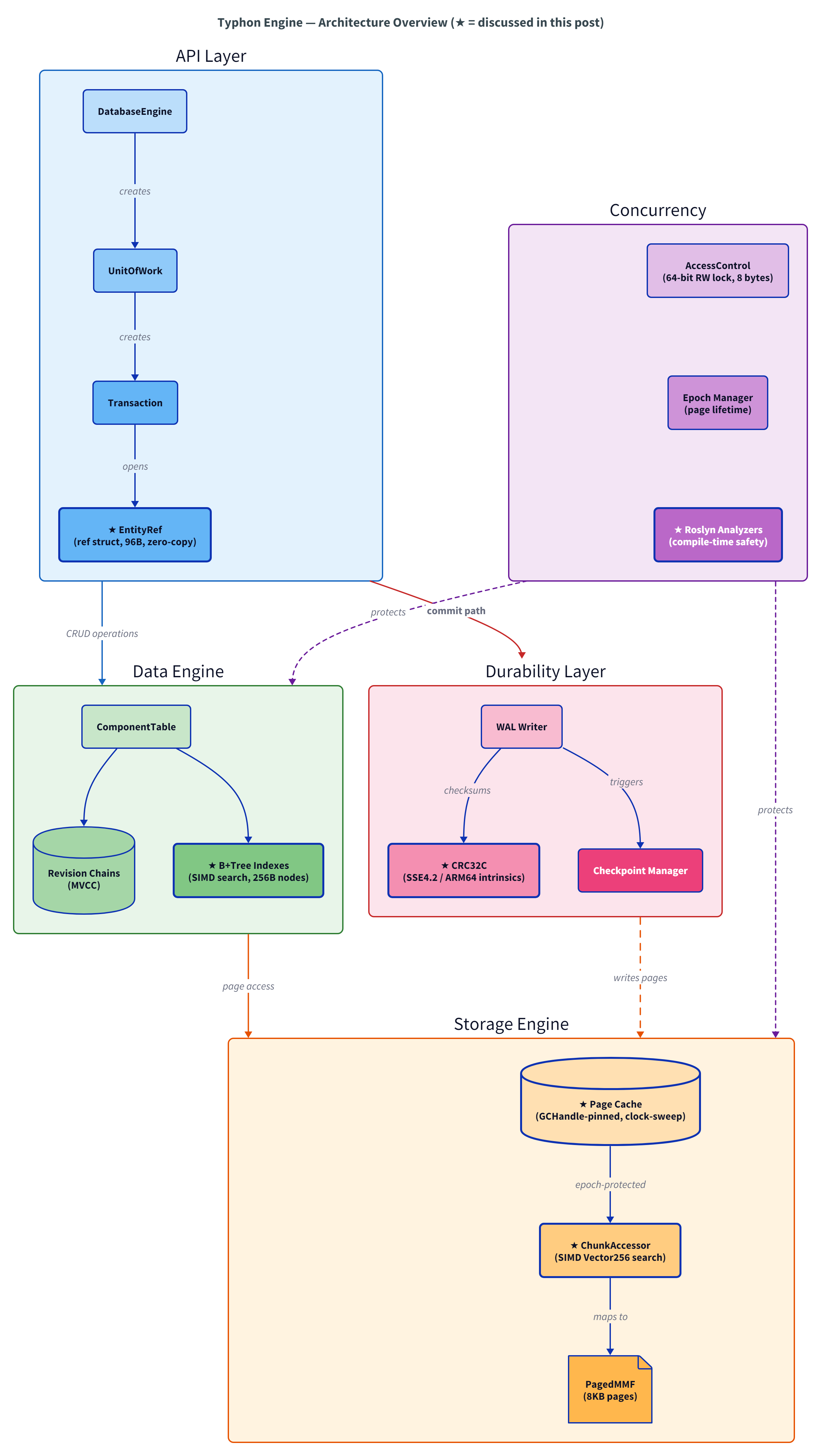

💡 Typhon is an embedded, persistent, ACID database engine written in .NET that speaks the native language of game servers and real-time simulations: entities, components, and systems.

It delivers full transactional safety with MVCC snapshot isolation at sub-microsecond latency, powered by cache-line-aware storage, zero-copy access, and configurable durability.

Series: A Database That Thinks Like a Game Engine

- Why I’m Building a Database Engine in C#

- What Game Engines Know About Data That Databases Forgot

- Microsecond Latency in a Managed Language (this post)

- Deadlock-Free by Construction (coming soon)


The first two posts in this series covered the why and the what. Why C# for a database engine. What happens when you combine ECS storage with database guarantees.

This post is the how. Specifically: the five design principles that guide every performance decision in Typhon. Not a bag of tricks — a philosophy. Individual optimizations come and go as the engine evolves, but these principles are stable. They’re what let a managed language deliver sub-microsecond transaction latency.

When your tick budget is 16 milliseconds and you have 100,000 entities to process, every nanosecond of per-entity cost matters. And most of that cost comes from decisions made at design time, not runtime.

Principle 1: Control Memory Layout

Performance starts at the struct definition, not the algorithm. If your data layout causes cache misses, no algorithm can save you.

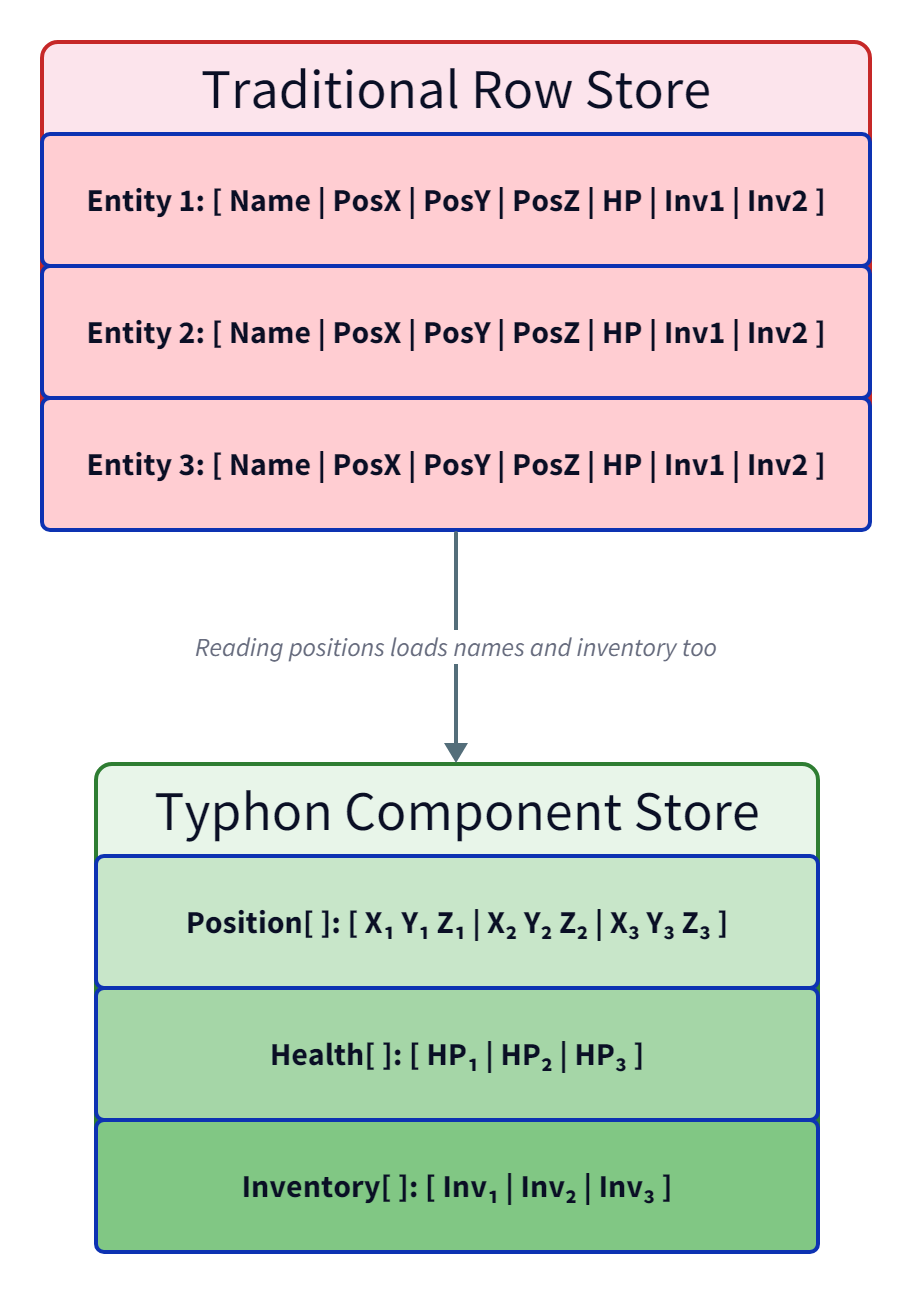

The most dramatic example: Typhon recently moved from per-entity hash-table lookups to cluster-based Structure of Arrays (SoA) storage. Same data, same queries, different memory layout. Measured on a Ryzen 9 7950X:

| Path | ns / entity | Speedup vs baseline |
|---|---|---|
| Standard EntityAccessor | 139 ns | 1.0x |
| ArchetypeAccessor (cached) | 94 ns | 1.5x |
| Cluster iteration | 2.5 ns | 55x |

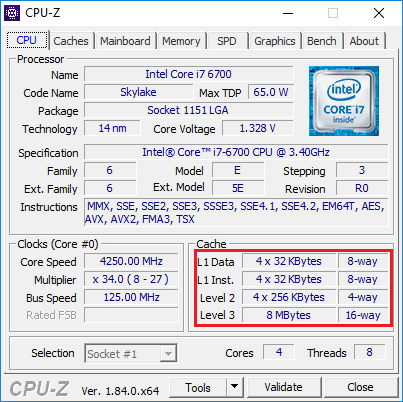

That’s a 55x improvement from changing memory layout alone. The reason: clusters pack N entities (8 to 64, auto-computed per archetype) in contiguous SoA memory. All positions together, all health values together. Every cache line the CPU loads is 100% useful data. For 100K entities, the working set dropped from scattered L3/DRAM access to ~2.5 MB that fits entirely in L2 cache — and L2 is 3x faster than L3 on Zen 4.

The cluster size isn’t a magic constant. An auto-tuning algorithm evaluates every N from 8 to 64 and picks the one that maximizes entities per 8 KB page for a given archetype’s component schema. Non-power-of-2 sizes often pack better: N=14 can yield 28 entities per page vs N=16 yielding only 16. The capacity is derived from the data, not from convention.
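A minimal sketch of that search, under a deliberately simplified cost model. It assumes a cluster occupies exactly N × bytesPerEntity bytes (no header, occupancy bitmap, or alignment padding), and `ClusterSizing`, `EntitiesPerPage`, and `PickClusterSize` are hypothetical names for illustration, not Typhon's API. The real tuner presumably accounts for more than this.

```csharp
public static class ClusterSizing
{
    public const int PageSize = 8 * 1024;   // 8 KB page, as in the text

    // Entities that fit in one page when each cluster holds n entities.
    // Simplifying assumption: a cluster occupies exactly n * bytesPerEntity bytes.
    public static int EntitiesPerPage(int n, int bytesPerEntity)
    {
        int clusterBytes = n * bytesPerEntity;
        int clustersPerPage = PageSize / clusterBytes;   // whole clusters only
        return clustersPerPage * n;
    }

    // Try every n in [8, 64] and keep the first one with the best packing.
    public static int PickClusterSize(int bytesPerEntity)
    {
        int bestN = 8, bestCount = -1;
        for (int n = 8; n <= 64; n++)
        {
            int count = EntitiesPerPage(n, bytesPerEntity);
            if (count > bestCount) { bestCount = count; bestN = n; }
        }
        return bestN;
    }
}
```

With a hypothetical 280-byte entity, `EntitiesPerPage(14, 280)` is 28 (two clusters per page) while `EntitiesPerPage(16, 280)` is 16 (one cluster per page), the same shape as the N=14 versus N=16 example. Under this simplified model the search then settles on N=29.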

False sharing is the other side of layout control. When multiple threads write to adjacent fields, the CPU bounces the shared cache line between cores, a 40-60 cycle penalty per bounce. Typhon wraps mutable per-thread state in 64-byte padded structs. The WAL commit buffer goes further: explicit padding fields isolate the producer’s _tailPosition and the consumer’s _drainPosition on separate cache lines. There are seven unused long fields between them (7 × 8 = 56 bytes of padding), suppressed with #pragma warning, because the correct layout matters more than the linter’s opinion.
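One way to get the same separation, sketched here with explicit field offsets instead of the unused padding fields the text describes. `PaddedPositions` and its field names are illustrative assumptions, not Typhon's actual type, and the sketch assumes the struct starts on the usual 8-byte boundary.

```csharp
using System.Runtime.InteropServices;

// Two counters written by different threads, placed 64 bytes apart so that
// (given 8-byte alignment) they can never land on the same 64-byte cache line.
[StructLayout(LayoutKind.Explicit, Size = 128)]
public struct PaddedPositions
{
    [FieldOffset(0)]  public long TailPosition;   // written by the producer
    [FieldOffset(64)] public long DrainPosition;  // written by the consumer
}

public static class PaddedPositionsLayout
{
    // Layout facts, exposed so they can be asserted in a unit test.
    public static int Size => Marshal.SizeOf<PaddedPositions>();

    public static long DrainOffset =>
        (long)Marshal.OffsetOf<PaddedPositions>(nameof(PaddedPositions.DrainPosition));
}
```

Checking the layout in a test is cheap insurance: a later refactor that reorders fields would otherwise reintroduce false sharing silently.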

The same hardware awareness drives B+Tree node sizing:

    using System.Runtime.InteropServices;

    [StructLayout(LayoutKind.Sequential, Pack = 4)]
    public unsafe struct Index32Chunk
    {
        // 256 bytes: fills four cache lines. Adjacent Line Prefetcher (ALP) on
        // Zen 4+/recent Intel automatically fetches paired 64-byte lines within
        // 128-byte regions, so two ALP triggers cover the full node.
        public const int Capacity = 29;

        // Header: 6 × 4 = 24 bytes
        public int Control;
        public int OlcVersion;  // bit 0 = locked, bit 1 = obsolete, bits 2-31 = version
        public int PrevChunk;
        public int NextChunk;
        public int LeftValue;
        public int HighKey;     // B-link upper bound

        public fixed int Values[Capacity];  // 29 × 4 = 116 bytes
        public fixed int Keys[Capacity];    // 29 × 4 = 116 bytes
    }

This struct is exactly 256 bytes because of the CPU’s prefetcher. The Adjacent Line Prefetcher on modern x86 fetches paired 64-byte lines within 128-byte aligned regions — so two ALP triggers cover the full node. A 256-byte node costs effectively the same as a 128-byte node in terms of memory access, but holds nearly twice the keys.

The capacity of 29 keys isn’t a round number because it isn’t derived from the algorithm. It’s derived from the hardware: 256 bytes of budget minus 24 bytes of header, divided across Keys and Values arrays. Typhon has three B+Tree variants — 16-bit, 32-bit, and 64-bit keys — and all three hit exactly 256 bytes with different capacities (38, 29, and 19 keys respectively). Post #1 mentioned 128-byte nodes. We’ve since moved to 256 bytes after measuring ALP behavior on Zen 4 — capacity went up, lookup latency stayed flat.
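The capacity arithmetic can be checked in a few lines. This sketch assumes every variant stores a 4-byte value next to each key, which matches the 32-bit struct shown earlier but is a guess for the other two. `NodeSizing` is a hypothetical helper name.

```csharp
public static class NodeSizing
{
    public const int NodeBytes = 256;   // node budget from the text
    public const int HeaderBytes = 24;  // six 4-byte header fields

    // Assumption: each entry is one key plus one 4-byte value.
    public static int Capacity(int keyBytes, int valueBytes = 4) =>
        (NodeBytes - HeaderBytes) / (keyBytes + valueBytes);
}
```

`Capacity(2)`, `Capacity(4)`, and `Capacity(8)` give 38, 29, and 19, the three capacities quoted above. Under this model the 16-bit and 64-bit variants use 252 bytes of entries plus header, so reaching exactly 256 bytes would take 4 bytes of padding.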

Principle 2: Eliminate Allocations on Hot Paths

In .NET, every allocation is a future GC event. On hot paths, the cost isn’t the allocation itself (~5 ns) — it’s the Gen0/Gen1 collection later that pauses unrelated threads. The discipline is simple: allocate nothing in steady state.

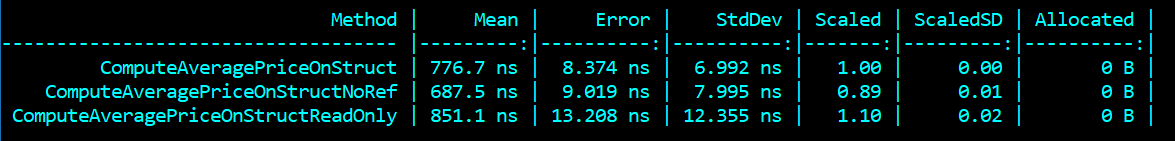

ref struct is the primary weapon. A ref struct lives on the stack, dies when the scope ends, and the GC never knows it existed. Post #1 showed EntityRef (96 bytes, inline component cache). But ref structs are a systematic discipline in Typhon, not a one-off optimization:

- OlcLatch: wraps a single ref int, the B+Tree node’s version field. The entire optimistic lock coupling protocol (read version, validate, try-write-lock) lives in a struct that’s basically a typed pointer. Allocated millions of times per second during tree traversal, at zero GC cost.

- EpochGuard: RAII scope for epoch-based page protection. Enter and exit in 3.3 ns. Because it’s a ref struct, it can’t be boxed, captured in a closure, or passed to async code: exactly the constraints you want for a scope guard.

- WalClaim: a Write-Ahead Log buffer claim containing a Span<byte> that points directly into native WAL memory. It can’t escape to the heap by construction, because a struct with a Span field has to be a ref struct.

- PointInTimeAccessor: a reusable snapshot attached to parallel workers. One per worker, stored in a flat array indexed by worker ID. Zero per-entity dictionary overhead: no Dictionary<EntityId, T> on the hot path.
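To make the scope-guard idea concrete, here is a minimal sketch of a ref struct used with `using`. The names `EpochCounter`, `ScopeGuard`, and `ScopeGuardDemo` are hypothetical, and the counter is a stand-in for whatever an epoch guard really stamps. It illustrates the language feature, not Typhon's EpochGuard.

```csharp
public sealed class EpochCounter
{
    public int ActiveScopes;   // stand-in for real epoch state
}

// A ref struct with a public Dispose() works with `using` (C# 8 and later)
// and cannot be boxed, captured by a lambda, or held across an await.
public ref struct ScopeGuard
{
    private readonly EpochCounter _owner;

    public ScopeGuard(EpochCounter owner)
    {
        _owner = owner;
        _owner.ActiveScopes++;              // enter
    }

    public void Dispose() => _owner.ActiveScopes--;   // exit
}

public static class ScopeGuardDemo
{
    // Returns the counter value observed inside and after the guarded scope.
    public static (int Inside, int After) Run()
    {
        var counter = new EpochCounter();
        int inside;
        using (var guard = new ScopeGuard(counter))
        {
            inside = counter.ActiveScopes;
        }
        return (inside, counter.ActiveScopes);
    }
}
```

The guard itself is never heap-allocated: it exists only in the stack frame of `Run`.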

For short-lived buffers, stackalloc with a threshold pattern: stack-allocate when the array is small (under 64 elements), fall back to the heap otherwise. Most arrays stay small, so they never touch the allocator.
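The threshold pattern looks roughly like this. `ScratchBuffers` and `SumOfSquares` are made-up names, and the workload is only a placeholder to give the buffer something to do.

```csharp
using System;

public static class ScratchBuffers
{
    private const int StackLimit = 64;   // threshold from the text

    public static long SumOfSquares(int count)
    {
        // Small requests live on the stack; larger ones fall back to the heap.
        Span<int> scratch = count < StackLimit
            ? stackalloc int[StackLimit]
            : new int[count];
        scratch = scratch.Slice(0, count);

        for (int i = 0; i < count; i++) scratch[i] = i * i;

        long sum = 0;
        foreach (int value in scratch) sum += value;
        return sum;
    }
}
```

Stack-allocating a fixed `StackLimit` rather than `count` keeps the stack frame size constant, which is a common choice for this pattern.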

For larger long-lived buffers, the Pinned Object Heap: GC.AllocateArray<byte>(capacity, pinned: true). Pre-zeroed by the OS, never compacted by the GC, stable pointer for direct access. Typhon’s HashMap uses this for its entire entry array.

For medium reusable buffers, ArrayPool<T>.Shared. FPI compression rents 9 KB buffers, returns them in a finally block. Query execution rents stream arrays sized for the common case (8 slots), doubles if needed.
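The rent-and-return shape, as a small sketch. `PooledCopy.Checksum` is a hypothetical example workload; only `ArrayPool<T>.Shared.Rent` and `Return` are real API here.

```csharp
using System;
using System.Buffers;

public static class PooledCopy
{
    public static int Checksum(ReadOnlySpan<byte> source)
    {
        // Rent may hand back a larger array than requested, so always
        // work with source.Length rather than rented.Length.
        byte[] rented = ArrayPool<byte>.Shared.Rent(source.Length);
        try
        {
            source.CopyTo(rented);
            int sum = 0;
            for (int i = 0; i < source.Length; i++) sum += rented[i];
            return sum;
        }
        finally
        {
            // Returned even if the work above throws.
            ArrayPool<byte>.Shared.Return(rented);
        }
    }
}
```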

Four strategies — ref struct for scoped access, stackalloc for small temporaries, POH for large long-lived buffers, ArrayPool for medium reusable buffers. The result: zero hot-path allocations in steady state.

Principle 3: Reduce Memory Indirections

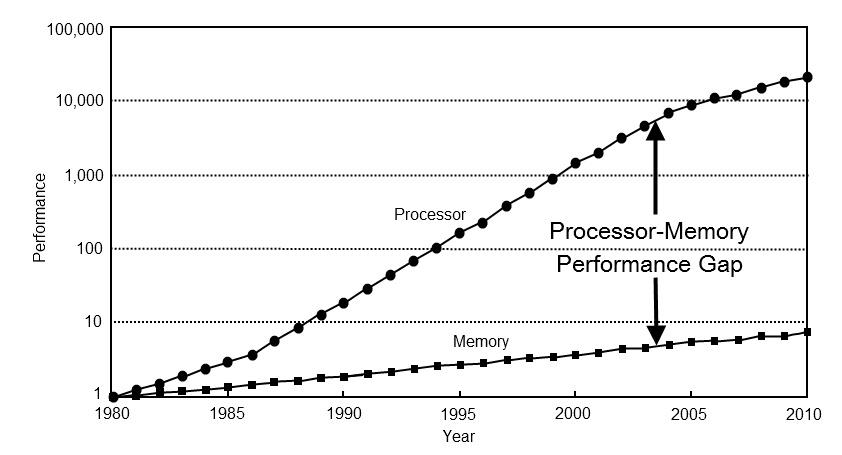

Every pointer chase is a potential cache miss. An L3 hit costs ~100 cycles, a DRAM miss costs ~200+. The goal: minimize the number of hops from “I want this data” to “here’s the data.”

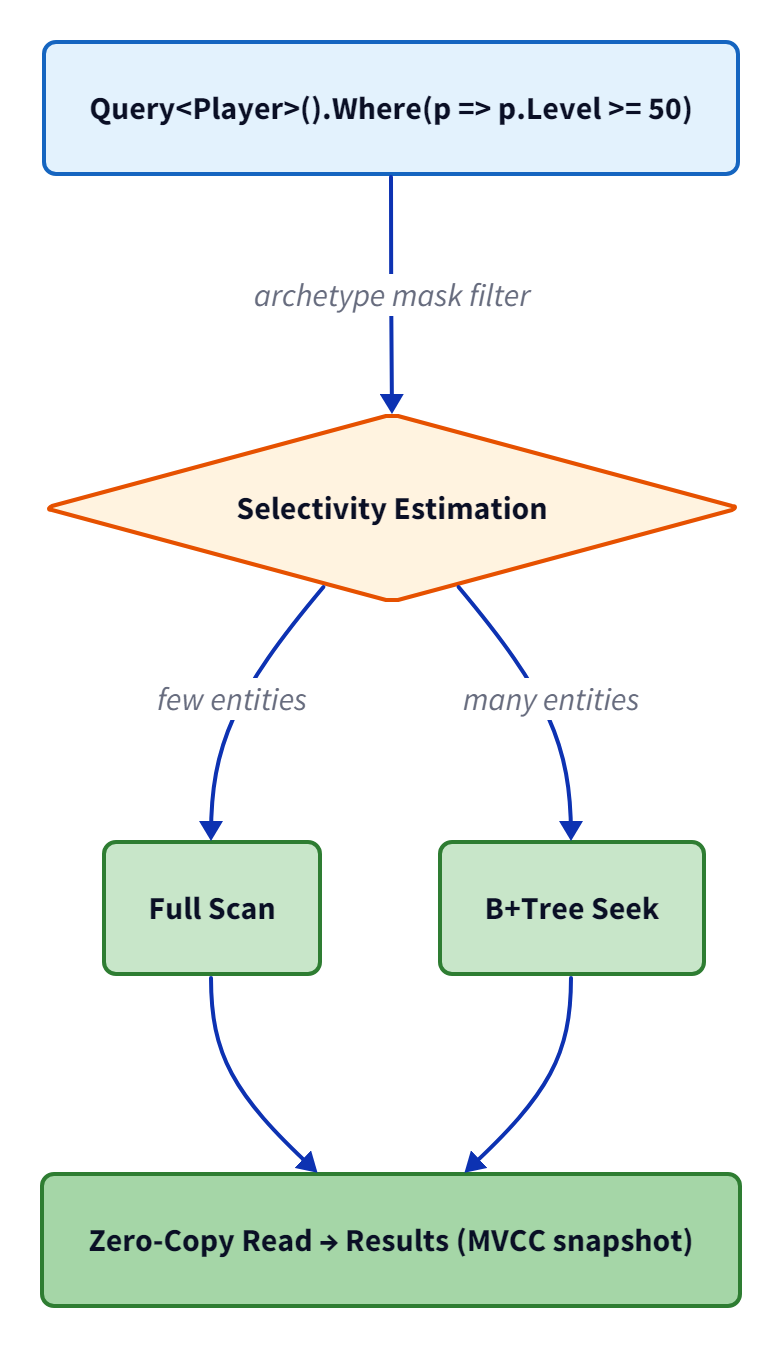

Post #1 showed the flagship example — the SIMD chunk accessor with its 3-tier lookup (MRU check, Vector256 search, clock-hand eviction). Each tier reduces indirection compared to the next.

Epoch-based page protection eliminates another class of indirection. The traditional approach: atomic increment on page access, atomic decrement on release. For N page accesses in a transaction, that’s 2N atomic operations — each one a potential cache-line bounce. Typhon uses epoch-based protection instead: one stamp when entering a transaction scope, one clear when exiting. Pages accessed within an active epoch can’t be evicted. Cost: 2 operations per transaction, regardless of how many pages are touched.

Zone maps eliminate entire clusters of indirection. Each indexed field maintains per-cluster min/max bounds. A range query like WHERE Level >= 50 checks two integers per cluster — if the cluster’s maximum is below 50, skip every entity in it without loading a single component byte. The impact at different selectivities, measured on 100K entities:

| Selectivity | Without zone maps | With zone maps | Speedup |
|---|---|---|---|
| 100% | 13.4 ms | 1.3 ms | 10x |
| 50% | 13.4 ms | 0.65 ms | 21x |
| 10% | 13.4 ms | 0.16 ms | 84x |
| 1% | 13.4 ms | 0.05 ms | 268x |
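The pruning check itself is tiny. The sketch below assumes one min/max pair per cluster for a single integer field. `ZoneMap` and `ZoneMapScan` are illustrative names, not Typhon's types.

```csharp
using System;

// Per-cluster bounds for one indexed field (hypothetical shape).
public readonly struct ZoneMap
{
    public readonly int Min;
    public readonly int Max;

    public ZoneMap(int min, int max) { Min = min; Max = max; }

    // True when [Min, Max] overlaps the query interval [lo, hi].
    public bool Overlaps(int lo, int hi) => Max >= lo && Min <= hi;
}

public static class ZoneMapScan
{
    // Counts the clusters that must actually be read for "field >= threshold".
    // Every other cluster is skipped without touching component data.
    public static int ClustersToScan(ReadOnlySpan<ZoneMap> zones, int threshold)
    {
        int toScan = 0;
        foreach (var zone in zones)
            if (zone.Overlaps(threshold, int.MaxValue)) toScan++;
        return toScan;
    }
}
```

For `WHERE Level >= 50`, a cluster with bounds (30, 49) is skipped outright, while (45, 60) still has to be scanned because some of its entities may qualify.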

The float ordering trick makes this work for non-integer types: an IEEE 754 sign-flip converts floats to a representation where integer comparison order equals numeric order, enabling the same two-comparison interval overlap check regardless of field type.
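A sketch of one common form of this trick, producing a signed integer key. The text doesn't say which variant Typhon uses, so treat `FloatOrdering.ToSortableInt` as an assumption. NaN is deliberately left out of scope.

```csharp
using System;

public static class FloatOrdering
{
    // Maps a float to an int whose signed ordering matches numeric ordering.
    // Non-negative floats already sort correctly by their raw bits. Negative
    // floats sort in reverse, so their lower 31 bits are flipped.
    public static int ToSortableInt(float value)
    {
        int bits = BitConverter.SingleToInt32Bits(value);
        return bits >= 0 ? bits : bits ^ int.MaxValue;
    }
}
```

Once min and max are stored as such keys, the interval check is the same pair of integer comparisons used for integer fields.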

At the other end of the scale, division elimination saves cycles on every single chunk lookup:

    // Field: precomputed at segment creation
    // Replaces expensive division (~20-80 cycles) with multiply+shift (~3-4 cycles)
    private readonly ulong _divMagic;

    // Constructor: compute magic multiplier once
    _divMagic = (0x1_0000_0000UL + (uint)_otherChunkCount - 1) / (uint)_otherChunkCount;

    // Hot path: every chunk lookup uses this instead of idiv
    var pageIndex = (int)((adjusted * _divMagic) >> 32);
    var offset = (int)(adjusted - (uint)(pageIndex * _otherChunkCount));

Integer division (idiv on x64) is notoriously slow — 20 to 80 cycles depending on operand size. The magic multiplier replaces it with a multiply and a shift: 3-4 cycles. The precomputation happens once when a segment is created; the benefit repeats on every one of the millions of chunk lookups that follow. Six lines of math, 20x speedup on a hot path. This is a classic systems programming trick that most managed-language developers have never needed — but when your per-entity budget is 2.5 nanoseconds, you need it.
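A self-contained version of the same idea, with a brute-force check against the built-in operators. `FastDivider` and `FastDividerCheck` are illustrative names. One caveat worth stating: with a 32-bit shift and the ceil(2^32 / divisor) multiplier, the result is guaranteed exact only for dividends below 2^32 / divisor, which is presumably ample for chunk indices but is not a general replacement for division.

```csharp
public readonly struct FastDivider
{
    private readonly uint _divisor;
    private readonly ulong _magic;   // ceil(2^32 / divisor)

    public FastDivider(uint divisor)
    {
        _divisor = divisor;
        _magic = (0x1_0000_0000UL + divisor - 1) / divisor;
    }

    // Multiply and shift instead of a hardware divide.
    public uint Divide(uint value) => (uint)((value * _magic) >> 32);

    public uint Remainder(uint value) => value - Divide(value) * _divisor;
}

public static class FastDividerCheck
{
    // Compares against / and % for every value below limit.
    // Returns the first mismatching value, or -1 when all agree.
    public static long FirstMismatch(uint divisor, uint limit)
    {
        var divider = new FastDivider(divisor);
        for (uint v = 0; v < limit; v++)
        {
            if (divider.Divide(v) != v / divisor || divider.Remainder(v) != v % divisor)
                return v;
        }
        return -1;
    }
}
```

Running `FirstMismatch` over the full range of values a segment can produce is a cheap way to validate a given divisor before trusting the fast path.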

Principle 4: Let the JIT Help

The JIT compiler is your optimization partner, not your enemy. Write code in patterns it can optimize, and it does work for you that you’d have to do manually in C or Rust.

Constrained generics give you monomorphization. When you write where TMask : struct, IArchetypeMask<TMask>, the JIT generates a separate native code path for each concrete type. ArchetypeMask256 (four ulong fields, bitwise operations) gets fully inlined — no vtable, no virtual dispatch. This is the same optimization Rust gets from generics, but opt-in through the struct constraint.
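A reduced example of the pattern. `IBitMask`, `Mask64`, and `ArchetypeFilter` are hypothetical stand-ins (a single ulong rather than the four-field mask mentioned above), chosen only to show the constraint shape.

```csharp
using System;

public interface IBitMask<TSelf> where TSelf : struct, IBitMask<TSelf>
{
    bool ContainsAll(in TSelf required);
}

public readonly struct Mask64 : IBitMask<Mask64>
{
    private readonly ulong _bits;

    public Mask64(ulong bits) => _bits = bits;

    public bool ContainsAll(in Mask64 required) =>
        (_bits & required._bits) == required._bits;
}

public static class ArchetypeFilter
{
    // Because TMask is constrained to a struct, the JIT compiles a dedicated
    // body per concrete mask type and can call ContainsAll directly.
    public static int CountMatches<TMask>(ReadOnlySpan<TMask> masks, in TMask required)
        where TMask : struct, IBitMask<TMask>
    {
        int matches = 0;
        foreach (var mask in masks)
            if (mask.ContainsAll(in required)) matches++;
        return matches;
    }
}
```

The same method written against a plain interface parameter would box each struct and dispatch through the interface, which is exactly what the constraint avoids.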

sealed enables devirtualization. DirtyBitmap and ArchetypeClusterInfo are both on hot paths and both sealed. The JIT knows no subclass can exist, so it converts virtual calls to direct calls and can inline them.

[AggressiveInlining] eliminates call overhead on micro-operations. B+Tree binary search, transaction state validation, every lock acquire/release — the overhead of a method call (save registers, set up stack frame, restore) is 2-5 ns. On a path called millions of times, that compounds.

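SoA layout makes vectorization straightforward. When a cluster is fully occupied (all N slots in use), the iteration loop becomes a simple sequential walk over contiguous SoA arrays with no branches, which is exactly the shape SIMD code needs: on AVX2, one instruction processes 8 floats. RyuJIT does very little loop auto-vectorization on its own, so in practice the win comes from Vector<T> or Vector256 code written against those arrays, which the JIT lowers to the matching AVX2 instructions. The SoA layout isn’t just about cache locality; it’s about handing the vector units data in the shape they want.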
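A sketch of what a vectorized pass over two SoA columns can look like when written explicitly with System.Numerics.Vector<T>. `SoaKernels.Integrate` is a made-up kernel for illustration, and the vector width is whatever the hardware reports (8 floats with AVX2).

```csharp
using System;
using System.Numerics;

public static class SoaKernels
{
    // positions[i] += velocities[i] * dt over contiguous SoA columns.
    public static void Integrate(Span<float> positions, ReadOnlySpan<float> velocities, float dt)
    {
        int width = Vector<float>.Count;
        var step = new Vector<float>(dt);
        int i = 0;

        // Vector body: one full-width multiply-add per iteration.
        for (; i <= positions.Length - width; i += width)
        {
            var p = new Vector<float>(positions.Slice(i));
            var v = new Vector<float>(velocities.Slice(i));
            (p + v * step).CopyTo(positions.Slice(i));
        }

        // Scalar tail for the remaining elements.
        for (; i < positions.Length; i++)
            positions[i] += velocities[i] * dt;
    }
}
```

An Array of Structures layout would need a gather or a shuffle to feed the same instructions, which is why the column layout matters here.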

But the most surprising JIT trick is dead-code elimination through static readonly fields:

    // TelemetryConfig.cs — field declarations
    /// <summary>
    /// static readonly fields allow the JIT to eliminate disabled telemetry code paths
    /// entirely. When a readonly field is false, the JIT treats guarded blocks as dead
    /// code and removes them completely in Tier 1 compilation.
    /// </summary>
    public static readonly bool Enabled;
    public static readonly bool EcsEnabled;
    public static readonly bool EcsActive; // Combined: Enabled && EcsEnabled

    // Static constructor — computed once at startup
    // (how config and ecsSection are obtained is not shown in this excerpt)
    static TelemetryConfig()
    {
        var section = config.GetSection("Typhon:Telemetry");
        Enabled = section.GetValue("Enabled", false);
        EcsEnabled = ecsSection.GetValue("Enabled", false);
        EcsActive = Enabled && EcsEnabled;
    }

    // EcsQuery.cs — usage on hot path
    if (TelemetryConfig.EcsActive)
    {
        activity = TyphonActivitySource.StartActivity("ECS.Query.Execute");
        activity?.SetTag(TyphonSpanAttributes.EcsArchetype, typeof(TArchetype).Name);
    }

When EcsActive is false, the JIT doesn’t just short-circuit the branch — it eliminates the entire if block from the generated native code. No branch instruction, no condition check, zero cost. The static readonly field, initialized in a static constructor, is treated as a constant after Tier 1 JIT compilation. The dead branch and everything inside it vanish.

This gives you zero-cost observability. Full OpenTelemetry tracing when enabled; literally nothing — not even a branch — when disabled. Most C# developers don’t know the JIT does this. It’s worth structuring your telemetry and feature flags around this pattern.

Principle 5: Design for the Hardware

The CPU manual is a requirements document. Cache-line size, SIMD register width, TLB coverage, memory bandwidth — these aren’t abstract numbers. They drive struct sizing, batch sizes, and allocation strategy.

Cache-line size (64 bytes on x86, 128 bytes on Apple Silicon) drives CacheLinePaddedInt sizing, B+Tree node alignment, and SoA array alignment. The ViewDeltaRingBuffer aligns each sub-buffer to 64-byte boundaries so that the hardware prefetcher doesn’t waste bandwidth loading adjacent unrelated data.

SIMD width determines batch sizes. Typhon’s SimdPredicateEvaluator uses three-tier CPU dispatch for filtering entities by field values: AVX-512 processes 16 integer comparisons per instruction, AVX2 processes 8, with a scalar fallback for older hardware. The AVX-512 path uses a workaround — .NET doesn’t expose 512-bit gather intrinsics, so it performs two 256-bit AVX2 gathers and combines them into a Vector512 for the comparison step. The JIT emits a native vpcmpd instruction for the 16-wide comparison. On Zen 4 (which double-pumps 512-bit operations), throughput matches two AVX2 iterations but with half the loop overhead.

Software prefetch hides memory latency where it matters most. During HashMap resize, speculative prefetch computes the future entry’s position in the resized table and issues Sse.Prefetch0 to start loading that cache line while the current entry is being processed. The JIT translates this to a prefetcht0 instruction — essentially free to issue, and it hides 100+ cycles of latency per entry.

BMI2 instructions accelerate spatial indexing. Morton key encoding (Z-order curves) uses Bmi2.ParallelBitDeposit to interleave X/Y coordinates in ~1 cycle. The scalar fallback costs ~10 cycles. Morton ordering places spatially adjacent grid cells at nearby array indices, improving cache locality during neighbor queries.
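A 16-bit-per-axis version of the encoding, with the scalar fallback written out. `Morton.Encode` and `Morton.EncodeScalar` are illustrative names. `Bmi2.ParallelBitDeposit` and `Bmi2.IsSupported` are the real .NET intrinsics API.

```csharp
using System.Runtime.Intrinsics.X86;

public static class Morton
{
    // Interleaves the low 16 bits of x (even bit positions) and y (odd bit positions).
    public static uint Encode(uint x, uint y)
    {
        if (Bmi2.IsSupported)
        {
            return Bmi2.ParallelBitDeposit(x, 0x5555_5555u)
                 | Bmi2.ParallelBitDeposit(y, 0xAAAA_AAAAu);
        }
        return EncodeScalar(x, y);
    }

    // Portable fallback using the classic shift-and-mask bit spreading.
    public static uint EncodeScalar(uint x, uint y) => Spread(x) | (Spread(y) << 1);

    private static uint Spread(uint v)
    {
        v &= 0x0000_FFFF;
        v = (v | (v << 8)) & 0x00FF_00FF;
        v = (v | (v << 4)) & 0x0F0F_0F0F;
        v = (v | (v << 2)) & 0x3333_3333;
        v = (v | (v << 1)) & 0x5555_5555;
        return v;
    }
}
```

Cells (0,0), (1,0), (0,1), and (1,1) map to keys 0, 1, 2, and 3, so each 2×2 block of the grid is contiguous in key order.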

TLB coverage constrains working set design. Without 2 MB huge pages, x86 L2 TLB covers only 8-12 MB. Every access beyond that risks a 15-20 ns page walk penalty on top of the data access itself. Typhon’s cluster storage keeps 100K entities in ~2.5 MB — comfortably within L2 TLB coverage even without huge pages. For larger datasets, the page cache’s 8 KB pages and sequential access patterns keep the hardware prefetcher effective.

Memory bandwidth (~50 GB/s on Zen 4) is the ceiling for bulk scans. If your SoA component scan isn’t approaching this number, something is leaving performance on the table — unnecessary indirection, poor alignment, or branches that defeat the prefetcher.

All measurements in this post were taken on an AMD Ryzen 9 7950X with .NET 10, BenchmarkDotNet, release configuration.

The Numbers

Individual principles are nice. What matters is how they compound. Here’s what the engine actually delivers:

| Operation | Latency |
|---|---|
| Cluster iteration (per entity) | 2.5 ns |
| CRUD lifecycle (spawn, read, update, destroy, commit) | 2.95 μs |
| Transaction create-read-commit (100 entities) | 3.6 μs |
| B+Tree point lookup (10K entries) | 191 ns |
| Component read (1 MVCC version) | 703 ns |
| Component read (50 MVCC versions) | 720 ns |
| Uncontended RW lock acquire | 7.5 ns |
| Page cache hit | 5.5 ns |
| Chunk accessor MRU hit | 1.1 ns |
| Epoch enter/exit | 3.3 ns |
| Cascade delete 10K entities | 7.6 μs |

The version invariance number deserves a callout: reading a component with 50 MVCC revisions costs the same as reading one with a single revision. 703 ns vs 720 ns — within measurement noise. The revision chain design works.

These principles also scale to parallel execution:

| Workers | Tick time | Speedup | Efficiency |
|---|---|---|---|
| 1 | ~37 ms | 1.0x | 100% |
| 2 | ~18 ms | 2.1x | 104% |
| 4 | ~10 ms | 3.8x | 95% |
| 8 | ~5.3 ms | 7.1x | 89% |

89% parallel efficiency on 8 workers. The 16-worker result (6.7x, 42% efficiency) hits the L3 cache / CCD boundary on the 7950X — a hardware wall, not a software one.

To put these numbers in perspective, here’s the concurrency cost hierarchy that drives Typhon’s design decisions:

| Level | Cost | Example |
|---|---|---|
| 0: Thread-local | ~2 ns | TLS counter, local variable |
| 1: Uncontended atomic | 5-10 ns | AccessControl read latch |
| 2: Contended atomic | 20-140 ns | Multiple writers, same lock |
| 3: System call | 500-1000 ns | Timestamp via syscall |
| 4: Context switch | ~10,000 ns | Blocking lock, futex wait |
| 5: Oversubscription | 100,000+ ns | More threads than cores |

Each level is roughly 10x more expensive than the previous one. Typhon’s AdaptiveWaiter (spin → yield → sleep progression) keeps most contention at Level 2, avoiding the 100x jump to Level 4. The cache-line padding from Principle 1 keeps parallel workers from bouncing each other between Level 1 and Level 2. Every design decision maps to staying as low in this hierarchy as possible.

Trade-offs

Unsafe is unsafe. These techniques require unsafe code — pointer arithmetic, raw memory access, manual layout control. One bug can corrupt the page cache. Roslyn analyzers catch some classes of errors at compile time, but not all. The safety net has holes.

Complexity budget. Magic multipliers, SIMD evaluators, epoch-based protection, zone maps — each one is simple in isolation. The combination creates a codebase that demands systems-level understanding to navigate. There’s no shortcut around understanding the hardware.

Not all of this transfers. Most .NET applications don’t need microsecond latency. Using CacheLinePaddedInt in a web API is premature optimization. These techniques are for when you’ve measured, profiled, and confirmed that memory access patterns are your bottleneck — not before.

What’s Next

The next post dives into concurrency: “Deadlock-Free by Construction: How Typhon Eliminates Deadlocks Instead of Detecting Them.” Most databases treat deadlocks as a runtime problem — detect the cycle, abort a transaction, retry. Typhon makes deadlocks structurally impossible through a three-pillar mathematical argument. No detection, no timeouts, no retries.
